S3cmd is a free, open-source command-line tool and client for uploading, retrieving and managing data in S3-compliant object storage. It’s a powerful tool for advanced users who are familiar with command-line programs, but it is also simple enough for beginners to learn quickly. It is also well suited to automation using, for example, shell scripts or cron.

Object Storage S3 access details

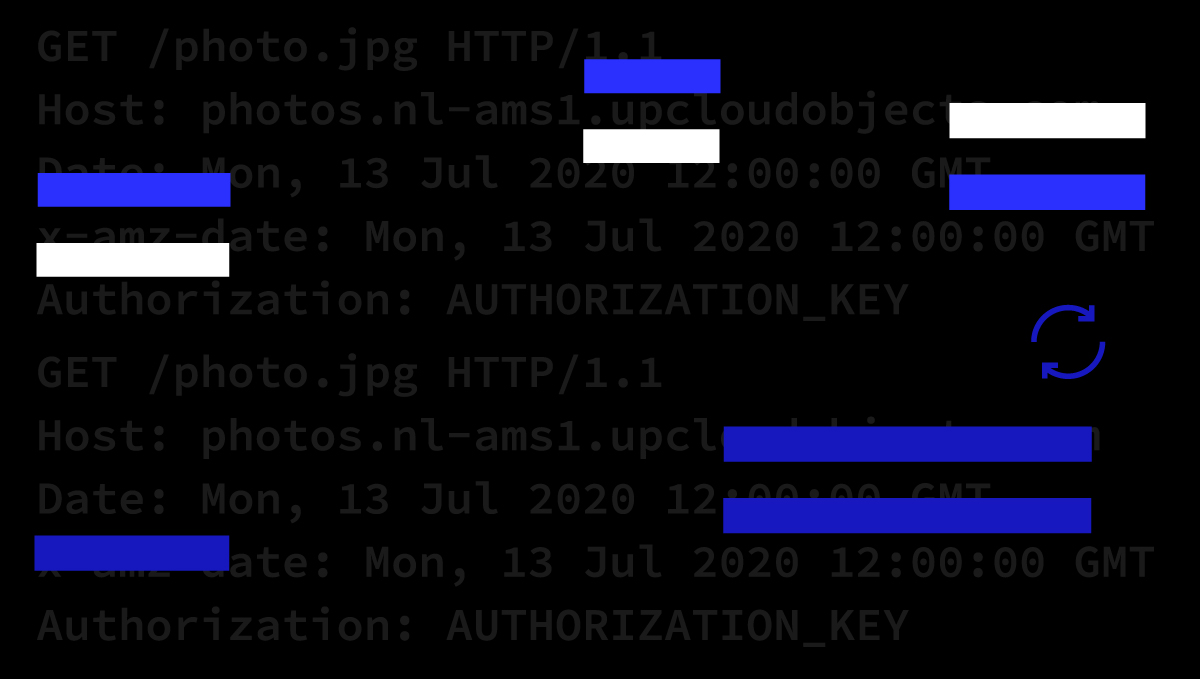

UpCloud Object Storage is fully S3-compliant, meaning any existing S3 client you prefer should be able to connect to and access your Object Storage. To do so, you will need to configure your S3 client to authenticate with the storage.

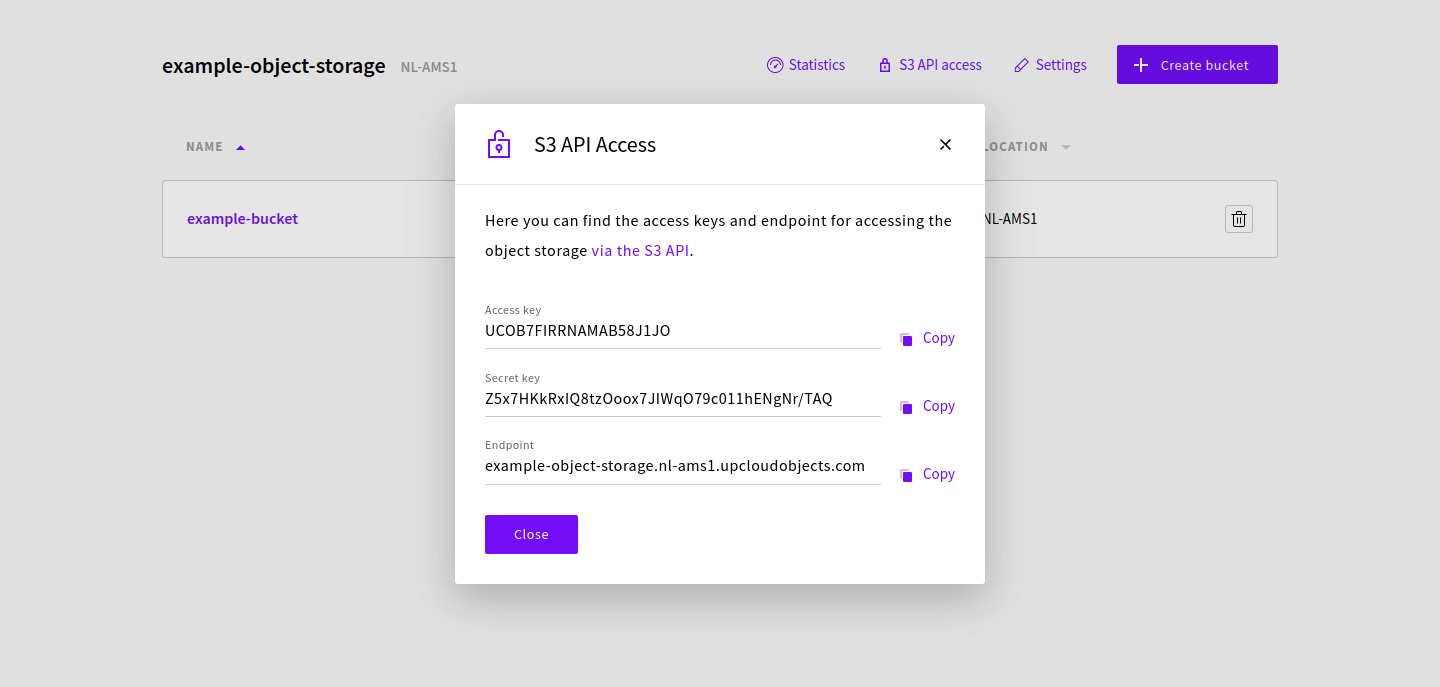

You can find the access details for your Object Storage in your UpCloud Control Panel.

The Object Storage access keys can also be retrieved using the API request below. Replace the {uuid} with the unique identifier of your Object Storage device.

Request

GET /1.3/object-storage/{uuid}

Response

HTTP/1.0 200 OK

{
  "object_storage": {
    "uuid": "063881d4-edc5-4102-8214-9b7e11edb9cb",
    "name": "example-object-storage",
    "description": "example-object-storage",
    "zone": "nl-ams1",
    "url": "https://example-object-storage.nl-ams1.upcloudobjects.com/",
    "access_key": "UCOB7FIRRNAMAB58J1JO",
    "secret_key": "Z5x7HKkRxIQ8tzOoox7JIWqO79c011hENgNr/TAQ",
    "quota_gb": 500,
    "used_gb": 0,
    "state": "started",
    "created": "2020-07-27T07:36:45Z"
  }
}

Make note of your Object Storage’s endpoint url, access_key and secret_key, which are needed to connect using an S3 client.
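For scripting, the three values can be pulled out of a saved API response. The following is a minimal sketch, assuming the response body has been saved to a file. `json_field` is a hypothetical helper written for this example, and it uses sed as a shortcut rather than a real JSON parser. The sample data is the example response from this guide.

```shell
#!/bin/sh
# Sample response saved to a temporary file; in practice this would be the
# body returned by the API request above.
response_file=$(mktemp)
cat > "$response_file" <<'EOF'
{
  "object_storage": {
    "url": "https://example-object-storage.nl-ams1.upcloudobjects.com/",
    "access_key": "UCOB7FIRRNAMAB58J1JO",
    "secret_key": "Z5x7HKkRxIQ8tzOoox7JIWqO79c011hENgNr/TAQ"
  }
}
EOF

# json_field KEY FILE (hypothetical helper): prints the string value of the
# first line containing "KEY": "value". Not a general JSON parser.
json_field() {
  sed -n "s/.*\"$1\"[[:space:]]*:[[:space:]]*\"\([^\"]*\)\".*/\1/p" "$2" | head -n 1
}

endpoint_url=$(json_field url "$response_file")
access_key=$(json_field access_key "$response_file")
secret_key=$(json_field secret_key "$response_file")

# S3cmd is given the endpoint as a bare hostname, so strip the scheme and
# the trailing slash from the URL.
endpoint_host=${endpoint_url#https://}
endpoint_host=${endpoint_host%/}

echo "Endpoint:   $endpoint_host"
echo "Access key: $access_key"

rm -f "$response_file"
```

The secret key is deliberately not printed. If a JSON-aware tool is available on your system, it is a more robust choice than the sed pattern used here.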

Installing S3cmd

Thanks to its ease of automation, S3cmd is a good option for managing your Object Storage from your cloud server. You can install S3cmd on most Linux distributions using pip, the package installer for the Python programming language.

First, make sure Python is installed and available.

# Ubuntu and Debian
sudo apt-get install python3 python3-distutils -y

# CentOS
sudo yum install python3 -y

Next, download the pip install script.

wget https://bootstrap.pypa.io/get-pip.py -O ~/get-pip.py

Then install pip using the following command.

sudo python3 ~/get-pip.py

Finally, install S3cmd using pip.

sudo pip install s3cmd

That’s it! Now that S3cmd is installed, you’ll need to configure it to connect to your Object Storage. Continue below with the steps on how to accomplish this.
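Before moving on, it can be worth confirming that the install actually put S3cmd on your PATH. The following is a small sketch of such a check. `have_command` is a hypothetical helper name, while `command -v` and `s3cmd --version` are standard.

```shell
#!/bin/sh
# have_command NAME (hypothetical helper): succeeds if NAME is found on PATH.
have_command() {
  command -v "$1" >/dev/null 2>&1
}

if have_command s3cmd; then
  # Print the installed version to confirm the tool runs.
  s3cmd --version
else
  echo "s3cmd was not found on PATH; check the output of the pip install step" >&2
fi
```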

Configuring S3cmd

S3cmd is configured to connect to a single Object Storage at a time by creating a configuration file. The file contains all the keys and details needed to manage buckets and files on the Object Storage.

Run the following command to use the configuration script.

s3cmd --configure

Then enter the required details in the order they appear. The values shown below are examples; use your own keys and endpoint URL as described in the first section of this guide. Also, set a password for file transfer encryption. The fields left empty in the example below can be skipped by pressing the Enter key to accept the default value.

At the end, confirm to save the settings.

Access Key: UCOB7FIRRNAMAB58J1JO
Secret Key: Z5x7HKkRxIQ8tzOoox7JIWqO79c011hENgNr/TAQ
Default Region [US]:
S3 Endpoint [s3.amazonaws.com]: example-object-storage.nl-ams1.upcloudobjects.com
DNS-style bucket+hostname:port template for accessing a bucket [%(bucket)s.s3.amazonaws.com]: %(bucket)s.example-object-storage.nl-ams1.upcloudobjects.com
Encryption password: password
Path to GPG program [/usr/bin/gpg]:
Use HTTPS protocol [Yes]:
HTTP Proxy server name:
...
Test access with supplied credentials? [Y/n]
Please wait, attempting to list all buckets...
Success. Your access key and secret key worked fine :-)
Now verifying that encryption works...
Success. Encryption and decryption worked fine :-)
Save settings? [y/N] y
Configuration saved to '/home/user/.s3cfg'

Once configured, you are ready to start working on your Object Storage!

If you want to access multiple Object Storage devices from the same system, you can create a separate .s3cfg configuration file for each and run S3cmd with the -c parameter to select which one to use.

s3cmd -c /path/to/.s3cfg
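An additional configuration file can also be written by a script instead of the interactive prompt. The following is a minimal sketch under a few assumptions: the keys and endpoint are the example values from this guide, the file path is made up for illustration, and only the basic connection settings are included. Any setting not listed is left to S3cmd's defaults.

```shell
#!/bin/sh
# Example values from this guide; replace with your own keys and endpoint.
ACCESS_KEY="UCOB7FIRRNAMAB58J1JO"
SECRET_KEY="Z5x7HKkRxIQ8tzOoox7JIWqO79c011hENgNr/TAQ"
ENDPOINT="example-object-storage.nl-ams1.upcloudobjects.com"

# Assumed location for the extra configuration file.
CONFIG_FILE="${TMPDIR:-/tmp}/example-object-storage.s3cfg"

# Create the file with owner-only permissions before writing secrets to it.
( umask 077; : > "$CONFIG_FILE" )

# Write the connection settings. %(bucket)s is a literal placeholder that
# S3cmd replaces with the bucket name.
cat > "$CONFIG_FILE" <<EOF
[default]
access_key = $ACCESS_KEY
secret_key = $SECRET_KEY
host_base = $ENDPOINT
host_bucket = %(bucket)s.$ENDPOINT
use_https = True
EOF

echo "Wrote $CONFIG_FILE"
# Use it with, for example: s3cmd -c "$CONFIG_FILE" ls
```

Keeping one file per Object Storage makes it clear in scripts which storage a command targets, since the -c parameter has to be given explicitly.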

Managing buckets

Now that you have S3cmd configured, test out a few commands to see how it works.

All data in Object Storage is organised in “buckets” of objects.

To start with, create a new bucket with the following command. Replace the example-bucket with whatever you want to name your bucket.

s3cmd mb s3://example-bucket

Bucket 's3://example-bucket/' created

Once you’ve created a bucket, you can confirm it by listing the buckets in your Object Storage.

s3cmd ls

2020-07-26 16:34 s3://example-bucket

If you want to remove a certain bucket, you can delete it by using the following command.

s3cmd rb s3://example-bucket

Bucket 's3://example-bucket/' removed

Note that all objects must be placed within a bucket. If you followed the step above and deleted the example bucket, create it again before continuing to the next section on how to transfer files to and from the Object Storage.

Managing files

S3cmd follows common file repository terminology in the object operations. It refers to copying files into the Object Storage as “put” and downloading files from the Object Storage as “get”. You can test out the various commands by using the example commands as described in this section.

Put object

Once you’ve created your bucket, put a file or files into it with the following command.

s3cmd put FILE [FILE...] s3://example-bucket[/PREFIX]

You can transfer any number of files at the same time by simply listing them separated by a space. You can also further organise the files into groups by assigning an optional prefix.

touch ~/example.txt
s3cmd put ~/example.txt s3://example-bucket
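The optional prefix is handy in automated uploads, for example to group files by date. The following is a sketch only: `dated_prefix` and `upload_with_prefix` are hypothetical helper names, and the `backups/` prefix layout is an assumption chosen for illustration.

```shell
#!/bin/sh
# dated_prefix [DATE] (hypothetical helper): prints a prefix such as
# "backups/2020-07-26/". Uses today's date when no argument is given.
dated_prefix() {
  printf 'backups/%s/' "${1:-$(date +%Y-%m-%d)}"
}

# upload_with_prefix BUCKET FILE [FILE...] (hypothetical helper): puts the
# listed files into the bucket under today's dated prefix.
upload_with_prefix() {
  bucket=$1
  shift
  s3cmd put "$@" "s3://$bucket/$(dated_prefix)"
}

# Example usage (requires a configured S3cmd and an existing bucket):
# upload_with_prefix example-bucket ~/example.txt
```

The trailing slash on the prefix matters when several files are uploaded at once, because it tells S3cmd to keep each file's own name under that prefix.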

List objects

Once the file has been copied into the Object Storage, it can be found by listing the contents of the target bucket.

s3cmd ls s3://example-bucket

2020-07-26 20:53 0 s3://example-bucket/example.txt
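In scripts, it is often only the object URLs that are needed from a listing. The following sketch extracts them with awk, assuming the four-column layout shown above (date, time, size, URL). `list_object_urls` is a hypothetical helper name, and the sample line is copied from this guide in place of real S3cmd output.

```shell
#!/bin/sh
# list_object_urls (hypothetical helper): reads `s3cmd ls` style lines on
# stdin and prints the fourth column when it is an s3:// URL.
list_object_urls() {
  awk '$4 ~ /^s3:\/\// { print $4 }'
}

# Sample listing copied from this guide, standing in for real output.
sample_listing='2020-07-26 20:53 0 s3://example-bucket/example.txt'

printf '%s\n' "$sample_listing" | list_object_urls

# With a configured S3cmd, the same helper can be used as:
# s3cmd ls s3://example-bucket | list_object_urls
```

Because awk splits on whitespace, object names that contain spaces would be cut short by this approach.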

Get object

Getting objects from buckets follows the same logic as put operations but in reverse order.

s3cmd get s3://BUCKET/OBJECT LOCAL_FILE

Get the same example.txt but rename it during copying by using the next command.

s3cmd get s3://example-bucket/example.txt ~/example2.txt

Copy object

You can also copy files between buckets within the same Object Storage without having to save the object elsewhere.

s3cmd cp s3://BUCKET1/OBJECT1 s3://BUCKET2/OBJECT2

First, create a new bucket, then copy the example.txt into it.

s3cmd mb s3://new-bucket
s3cmd cp s3://example-bucket/example.txt s3://new-bucket/

Move object

There’s also an option to move objects between buckets, which works much like the copy operation above.

s3cmd mv s3://BUCKET1/OBJECT1 s3://BUCKET2[/OBJECT2]

The following command will move the example.txt to the new bucket and rename it to example2.txt.

s3cmd mv s3://example-bucket/example.txt s3://new-bucket/example2.txt

List all objects

Afterwards, you can check the files in all buckets by using the list all command as shown below.

s3cmd la

2020-07-26 21:00 0 s3://new-bucket/example.txt
2020-07-26 21:00 0 s3://new-bucket/example2.txt

Delete object

Naturally, it’s also possible to delete files when they are no longer needed.

s3cmd rm s3://BUCKET/OBJECT

Once done, delete the example files from the new bucket with the following command.

s3cmd rm s3://new-bucket/example.txt s3://new-bucket/example2.txt

Afterwards, both of your test buckets should be empty and can be deleted as well.

Other operations

Disk usage

The du command provides information about storage usage by bucket.

s3cmd du [s3://BUCKET[/PREFIX]]

Bucket and file info

The info command is used to get information about buckets or files.

s3cmd info s3://BUCKET[/OBJECT]

Modify metadata

The modify command can be used to change the object metadata.

s3cmd modify s3://BUCKET1/OBJECT

Check the full list of supported Object Storage operations by using the help command.

s3cmd --help