Containers are by no means a new thing at this point. However, their popularity is certainly not declining, thanks to the convenience and ease of use they offer. Just about any app can be containerized for quick scalability, but are you compromising on something when doing so? What container performance should you expect given the additional virtualization layer? The only way to find out is to test it yourself!

While it’s easy to compare providers on simple benchmark results, getting a more in-depth look into the cost and performance metrics takes a bit more effort. Throw containers into the mix and you have quite the equation to balance out. Therefore, to get a better understanding of the costs and benefits of containers, we decided to put some container configurations through their paces.

Containers in the cloud

The move towards cloud infrastructure has long been supported in part by the savings offered by competitive pricing and reduced administrative overhead. Users can achieve further cost reductions by making efficient use of their resources, and containers can provide a convenient method for fully utilising cloud servers. But too much of any good thing can be to the detriment of its benefits.

One of the main selling points of containers is the ability to isolate the applications running in separate containers without adding too much in the way of overhead. However, it’s a commonly held belief that containers impose performance costs. Due to the extra layer of virtualization, containerized applications might not achieve quite the same performance as those running natively on the OS. This was still the case some years ago but technology has come a long way since.

As self-hosted server hardware is becoming rarer, containers more often run on top of already virtualized systems such as cloud servers. While each cloud server runs a full guest operating system, containers share the same kernel between themselves and the cloud server hosting them. Then again, thanks to the flexibility of the cloud, you can easily deploy any number of cloud servers with varying configurations.

The question then turns to the balance between the number of individual cloud servers and the number of containers hosted on each. Although the final infrastructure might look mostly the same from a single container’s point of view, the application scalability and performance can differ. Therefore, it becomes ever more important to validate the optimal number of containers per cloud server resources.

Testing the theory

It would be a simple task to just deploy the largest configuration available and test how many containers you can stuff into it. However, the amount of resources a single container can utilise depends largely on the containerized application. That is why, instead of attempting to simulate a real-world use case, we decided to fully stress the servers by running a varying number of concurrent CPU benchmarks within Docker containers.

To save time and manual work, the cloud servers were deployed using Terraform to create a clean environment for each run. As we needed to quantify the container performance objectively, we chose Sysbench to run the CPU benchmarks. Although Sysbench does not offer a ready-made container, it was simple to build one from the latest Ubuntu container along with the appropriate packages. Each server was also benchmarked running Sysbench natively on the OS to create a baseline.
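As a rough illustration of what such a run could look like, here is a minimal Python sketch that starts several Sysbench CPU benchmarks in Docker containers at the same time and reads the score from each. It is not the tooling used for these benchmarks. The image name `sysbench-ubuntu` is hypothetical, and the sketch assumes Sysbench 1.0 command syntax (`sysbench cpu --threads=N run`) and its `events per second` output line.

```python
import re
import subprocess

# Hypothetical image name; the article does not name the image it built.
IMAGE = "sysbench-ubuntu"


def build_command(threads, image=IMAGE):
    """Return the argv list for one containerized Sysbench CPU run.

    Assumes Sysbench 1.0 syntax: `sysbench cpu --threads=N run`.
    """
    return ["docker", "run", "--rm", image,
            "sysbench", "cpu", f"--threads={threads}", "run"]


def parse_events_per_second(output):
    """Extract the 'events per second' figure from Sysbench output text."""
    match = re.search(r"events per second:\s*([0-9.]+)", output)
    if match is None:
        raise ValueError("no 'events per second' line found")
    return float(match.group(1))


def run_concurrently(containers, threads):
    """Start all containers first, then collect one score per container."""
    procs = [
        subprocess.Popen(build_command(threads),
                         stdout=subprocess.PIPE, text=True)
        for _ in range(containers)
    ]
    return [parse_events_per_second(p.communicate()[0]) for p in procs]
```

Calling `run_concurrently(4, 1)` on a host with Docker and such an image would start four single-threaded benchmarks side by side. Starting every process before reading any output is what makes the runs overlap rather than execute one after another.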

The results speak well for the performance attained by containerized applications. Containers were able to reach performance numbers within the margin of error of the native, uncontainerized baseline. Below you can see example performance numbers per container for each number of containers on UpCloud. The number of threads per container was adjusted according to the total number of containers to fully utilise each server configuration, oversubscribing where necessary.

Performance per container (events/s) by number of containers

| Configuration | Native | 1 | 2 | 4 | 6 | 8 | 12 | 16 | 20 |
|---|---|---|---|---|---|---|---|---|---|
| 1CPU-2GB | 410.05 | 409.76 | | | | | | | |
| 2CPU-4GB | 803.52 | 803.33 | 400.33 | | | | | | |
| 4CPU-8GB | 1640.29 | 1648.11 | 829.04 | 410.42 | | | | | |
| 6CPU-16GB | 2465.24 | 2616.64 | 1316.46 | 724.98 | 414.1 | | | | |
| 8CPU-32GB | 3895.53 | 3842.9 | 1928.47 | 959.51 | 658.08 | 487.61 | | | |
| 12CPU-48GB | 5921.93 | 5881.14 | 2963.14 | 1440.09 | 988.49 | 707.62 | 493.95 | | |
| 16CPU-64GB | 7814.03 | 7256.47 | 3924.92 | 1973.86 | 1309.65 | 981.08 | 705.12 | 494.38 | |
| 20CPU-96GB | 9896.47 | 9899.97 | 4949.09 | 2474.39 | 1639.14 | 1259.72 | 895.73 | 685.98 | 494.87 |
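One way to read the table is to multiply each per-container score by the number of containers and compare the total with the native baseline. The Python sketch below does this for three of the rows above. The thread rule in `threads_per_container` (rounding CPU cores divided by containers up to the next whole number) is an assumption about how oversubscription may have been applied, not something the benchmark description confirms.

```python
import math

# (native events/s, {containers: events/s per container}), copied from
# three rows of the table above.
RESULTS = {
    "4CPU-8GB": (1640.29, {1: 1648.11, 2: 829.04, 4: 410.42}),
    "6CPU-16GB": (2465.24, {1: 2616.64, 2: 1316.46, 4: 724.98, 6: 414.1}),
    "20CPU-96GB": (9896.47, {1: 9899.97, 2: 4949.09, 4: 2474.39,
                             6: 1639.14, 8: 1259.72, 12: 895.73,
                             16: 685.98, 20: 494.87}),
}


def threads_per_container(cpus, containers):
    """Assumed rule: round up so every core is used, oversubscribing if needed."""
    return math.ceil(cpus / containers)


def aggregate_ratio(native, per_container, containers):
    """Combined throughput of all containers relative to the native run."""
    return per_container * containers / native


def summarize(results=RESULTS):
    """Return (config, containers, threads per container, ratio) tuples."""
    rows = []
    for config, (native, runs) in results.items():
        cpus = int(config.split("CPU")[0])
        for containers, per_container in runs.items():
            ratio = aggregate_ratio(native, per_container, containers)
            rows.append((config, containers,
                         threads_per_container(cpus, containers),
                         round(ratio, 3)))
    return rows


for row in summarize():
    print(row)
```

A ratio close to 1.0 means the containers together deliver about the same throughput as the native run on that server.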

We at UpCloud have always had a big focus on performance and we stand by our slogan of offering the world’s fastest cloud servers. Therefore we did not shy away from comparing our servers with some of the most popular cloud providers. The tests were run on a long list of different cloud server configurations from DigitalOcean, AWS, Linode, and of course, UpCloud.

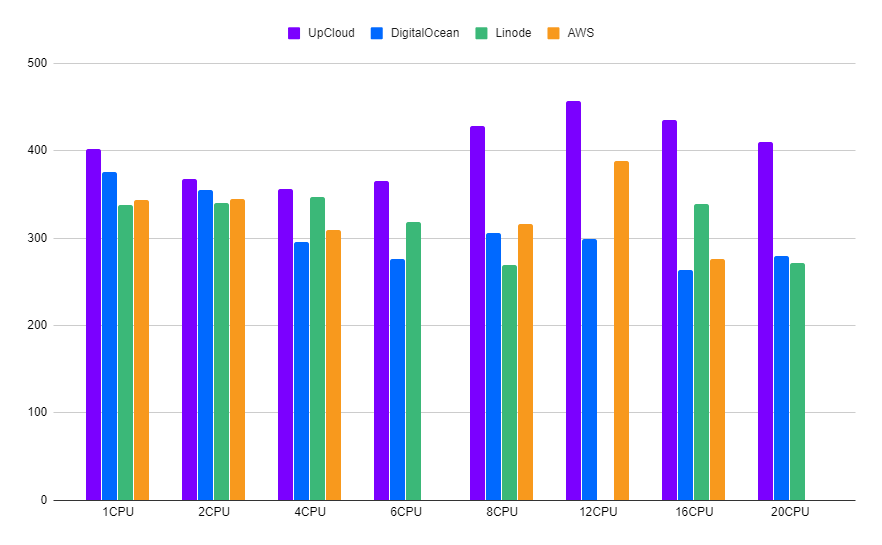

Single-core performance for containers immediately reflects the power differences between the providers. Our cloud servers are consistently able to edge out better single-threaded performance regardless of the number of CPU cores allocated to the system. Note that Linode and AWS do not offer all the same configuration options as DigitalOcean and UpCloud and are therefore absent in certain results.

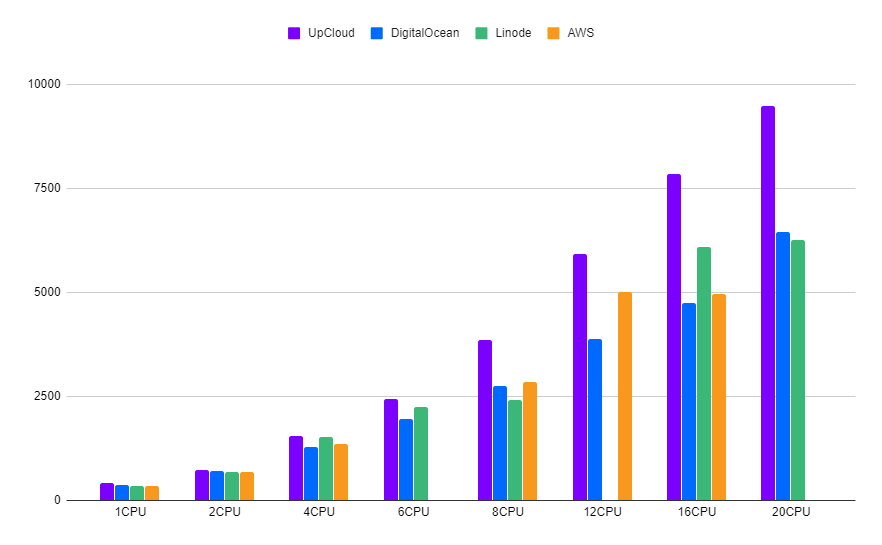

Consequently, multi-core performance in containers follows the same trend. While smaller configurations remain relatively closely grouped, UpCloud attains a clear lead as the number of usable CPU cores increases.

Although containers are certainly nothing magical, containerized apps, just like other multithreaded workloads, can make more efficient use of the host system’s resources. Depending on how continuously each container utilises the available resources, it might even be advantageous to oversubscribe the server. This, of course, has the downside of reducing the performance available to individual containers.

The take-away

One of the advantages of containerized applications is the possibility of running multiple instances of the same software on a single cloud server. This allows you to increase the number of threads even if the application itself is not multithreaded, as long as the final use case supports it. You then have the option to split the expected workload between multiple cloud servers by deploying a cluster. Furthermore, as we’ve shown above, containers are fully capable of providing competitive performance should your application need it.

Thanks to the fixed monthly pricing options on most popular cloud providers, the expected resource costs are easily calculated. Therefore, it’s possible to plan your infrastructure around the optimal number of multithreaded applications per cloud server resources without letting the costs get out of hand. Additionally, by carefully planning your server configurations, you might also be able to save on your infrastructure costs by optimizing the resource split between your cloud servers.
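The planning arithmetic can be sketched in a few lines of Python. The plan names, container counts per server and monthly prices below are placeholders invented for illustration and are not real pricing from any provider. Substitute your own measurements and your provider's price list.

```python
import math


def servers_needed(instances, instances_per_server):
    """Number of servers required to host the given number of containers."""
    return math.ceil(instances / instances_per_server)


def monthly_cost(instances, instances_per_server, price_per_server):
    """Total fixed monthly cost for hosting all container instances."""
    return servers_needed(instances, instances_per_server) * price_per_server


# Placeholder plans: name -> (containers per server, monthly price).
# These figures are hypothetical and NOT real provider pricing.
PLANS = {"small": (2, 10.0), "large": (8, 45.0)}


def cheapest_plan(instances, plans=PLANS):
    """Return the (plan name, monthly cost) pair with the lowest total cost."""
    costs = {
        name: monthly_cost(instances, per_server, price)
        for name, (per_server, price) in plans.items()
    }
    best = min(costs, key=costs.get)
    return best, costs[best]
```

Because servers come in whole units, the cheapest split can change with the number of containers, so it is worth recalculating whenever the expected workload changes.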

Although the scope of these benchmarks did not include stress tests to a point that would have run into bottlenecks, certain limitations of the container technology could pose restrictions. Mainly, as containers share the underlying kernel, some workloads might become constricted by the shared resources. The effects of kernel bottlenecks are further discussed at Hackernoon.com in an article investigating low performance in containers.
